Jingguang Li

jingguangli2001 AT gmail.com. My name can be pronounced Lee Kyung-kwang in Korean.

Years may wrinkle the skin,

but to give up enthusiasm wrinkles the soul.

Hi! 👋 My name is Jingguang Li (李景光). I am currently a Master’s student in Artificial Intelligence at the State Key Laboratory of IoTSC, University of Macau. I will be joining the Cho Chun Shik Graduate School of Mobility, Korea Advanced Institute of Science and Technology as a PhD student in the Fall of 2026, working under the supervision of Dr. Heye Huang.

I expect to earn my M.Sc. in 2026 under the guidance of Prof. Chengzhong Xu and Prof. Li Li. Before this, I received my B.Eng. in Software Engineering from Harbin Institute of Technology in 2024.

I am open to research discussions and industry connections! Feel free to email me if you are interested in collaborating or sharing ideas.

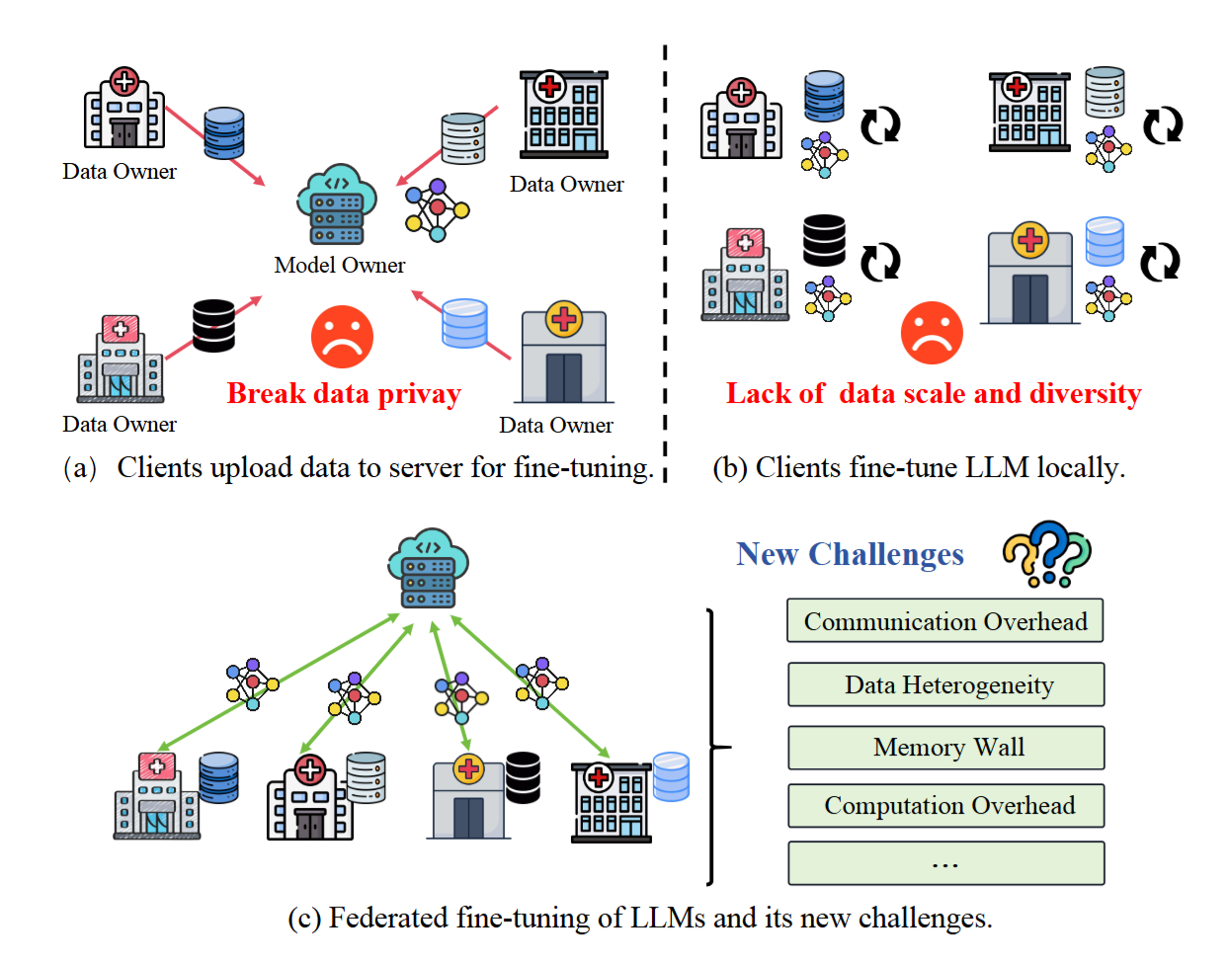

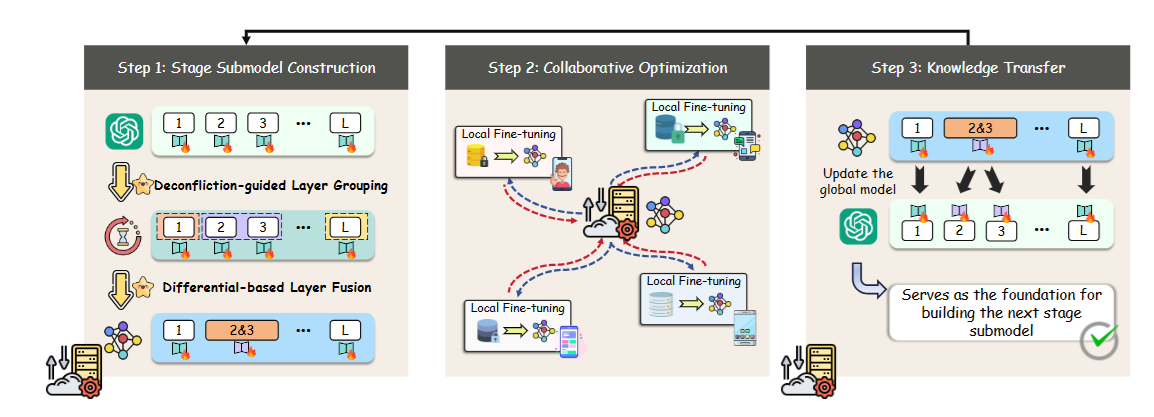

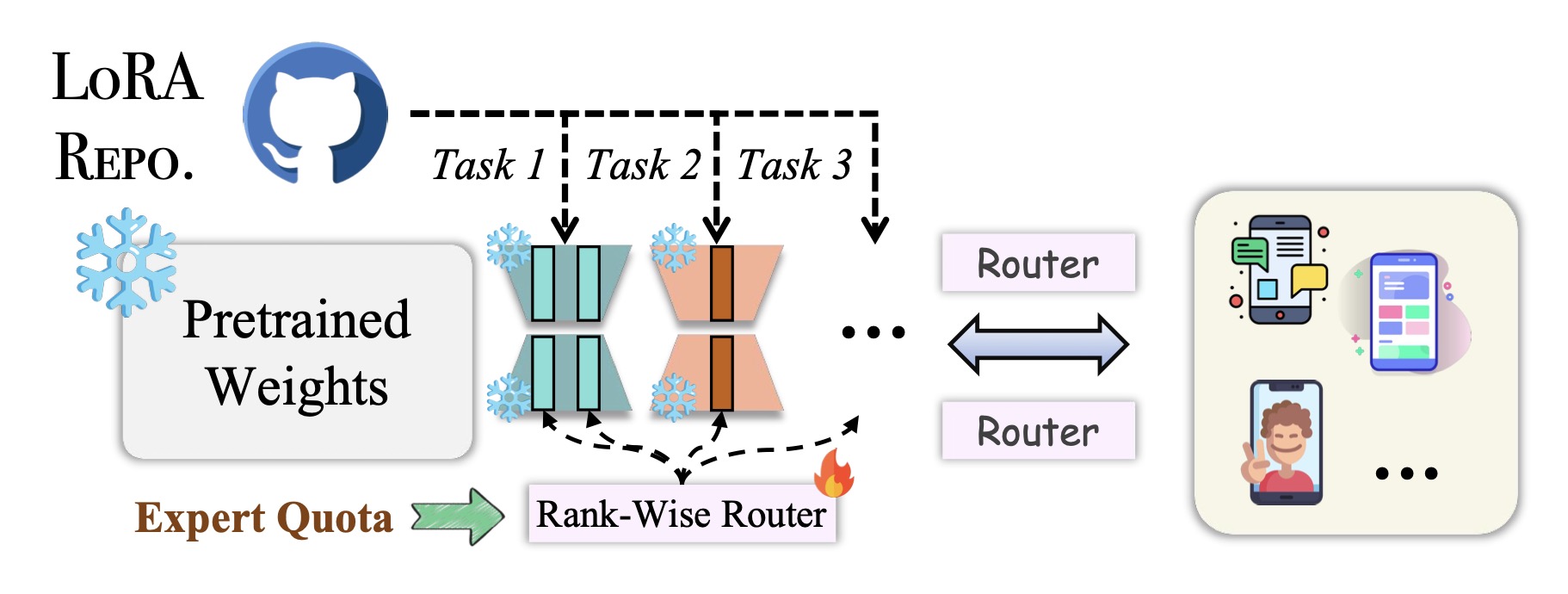

I am interested in natural language processing and machine learning, with a particular focus on the efficient post-training of large language models (LLMs) under tight memory, communication, and compute constraints. I also work on mitigating hallucinations in multimodal LLMs to address perceptual deficiencies. Moving forward, my research interests are expanding into applications of LLM agents.

news

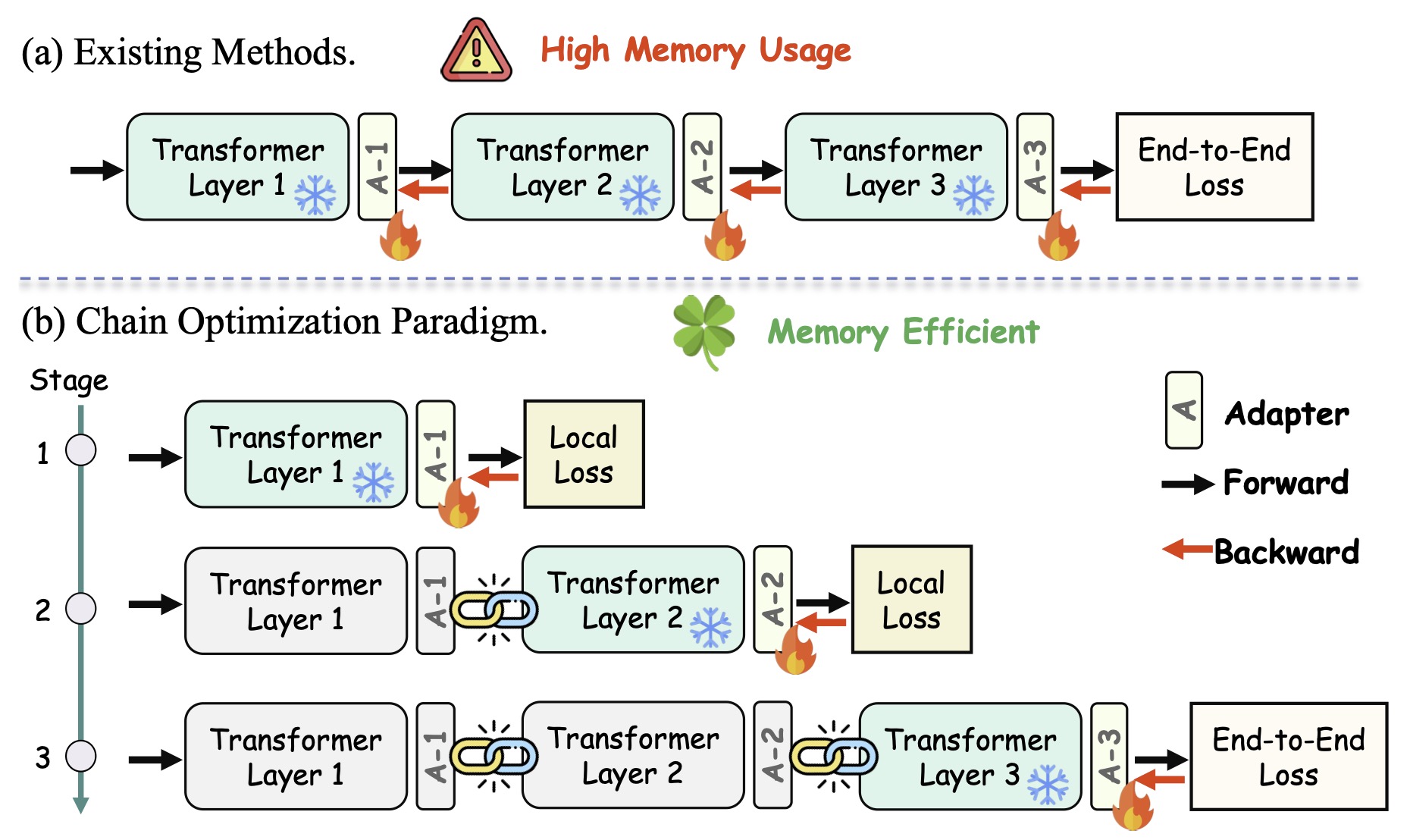

| Date | News |
|---|---|
| Apr 06, 2026 | Our paper ChainFed is accepted by ACL 2026 Jan 🎉 |
| Mar 18, 2026 | I am going to join the Graduate School of Mobility, KAIST as a PhD student in the Fall of 2026, working under the supervision of Dr. Heye Huang 😊 |
| Feb 13, 2026 | Our survey is accepted by TMLR 🎉 |
| Jan 26, 2026 | Our paper DevFT is accepted by ICLR 2026 🎉 |